You didn’t have to be alive in that summer of 1966, or even, like most of the country, glued to a black and white television, to recognise that catchphrase; it’s been recycled so many times since. It was the closing moments of the World Cup final, with England poised for a dramatic victory over West Germany, and as the referee fumbled for his whistle, fans began to invade the pitch in anticipation. BBC commentator Kenneth Wolstenholme noted the crowd, but with the score at 3-2 in extra time, it was still feasible that a late shock could spoil the show. That’s when Geoff Hurst hammered in the deciding shot that triggered the now famous punch line. It was spontaneous, unscripted, and entirely unpredictable, and sealed the moment in history with just three words.

Sport, whichever is your preference, is one of those activities that can produce moments of magic and despair in equal proportions, precisely because it can never be entirely predicted or planned. That in itself is remarkable considering the immense preparation, research and training that now go into almost any sporting venture. This places it alongside great music, art and invention as a truly creative human trait. In the words of the verbal genius, Murray Walker, ‘anything can happen - and usually does.’

I was reflecting on this conundrum after listening to yet another discussion on the place of Artificial Intelligence, and it seemed to me that here was a good example of its benefits and probable limitations. Imagine a computer bot doing a commentary on that football match. We would no doubt get a much more detailed description of tactics, positions and the trajectory of spherical objects. But would it deliver that final sentence that sealed the moment in people’s minds long after most of the match had been forgotten?

Well, I’ll let you decide on that one. Personally I think it shows that both have something to contribute and that combined they make a much greater whole.

But it may be that manual input simply becomes too irksome or time consuming, so that it is no longer the first choice, or even a consideration. When AI becomes the go-to option, it becomes a knee-jerk reaction rather than a complete solution.

The term AI now covers a multitude of tasks. The phrase has become so familiar, especially as a marketing tool, that it is often applied to actions that are not actually generated by artificial intelligence at all.

So I make no apology for explaining this at some length, because it may help clear some of the confusion and throw some much needed light on more than the creation and manipulation of images. Intelligent processors are so much a part of so many of the things we use daily that it’s very important to clarify how they work, and why, sometimes, they don’t.

A good deal of what is now classified as AI through convenience is actually Machine Learning, and as I’ve probably been guilty of making this assumption in the past, this is a chance to correct an important misunderstanding. The two are quite different in the way they work, and while both have been around in computer circles from the early days, it is only in recent years that AI has started to be the dominant force.

From the beginning computers were very good at making calculations - like Alan Turing cracking the seemingly impossible Enigma code. Machine Learning recognises patterns to predict outcomes. The more it is given, the more it learns. So it became possible for it to learn to play chess and anticipate moves on the board, because it is a very logical game. By the same logic, predictive text became feasible - but only a word or two at a time. No giant leaps or whole sentences, and often awkward failures. Without a clear path, it couldn’t take giant creative strides.
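For anyone curious how that word-at-a-time guessing can work, here is a minimal sketch in Python. It is an illustration only, not how any particular phone or keyboard does it: the function names and the sample sentence are made up for the example. It simply counts which word most often follows another and offers that as the suggestion.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for every word in the sample text, which words follow it."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        followers[current][following] += 1
    return followers


def predict_next(followers, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical sample text; a real system would learn from far more examples.
sample = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigrams(sample)

print(predict_next(model, "the"))    # the commonest follower of "the" in the sample
print(predict_next(model, "piano"))  # a word it has never seen: no suggestion
```

Give it a word it has met before and it offers the most familiar follow-up; give it one it hasn't and it has nothing to say, which is the awkward failure in miniature.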

Facial recognition worked on the same principle - it could identify a particular face as long as it provided a recognisable profile - otherwise it was a dismal failure. But from these early lessons it was a comparatively short technical hop for search engines to find pictures of food, friends or cute kittens.

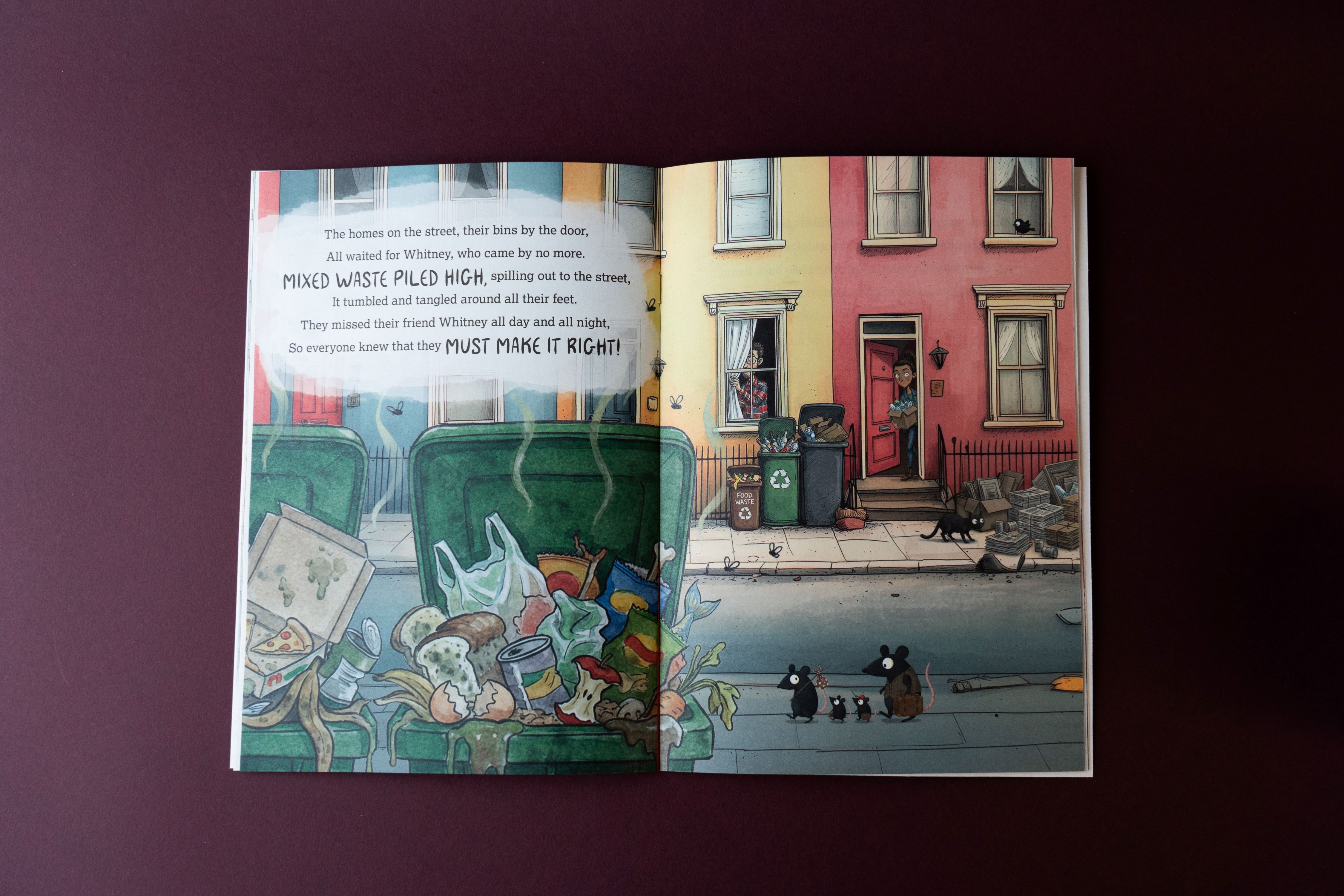

The only limitation on the machine’s ability to learn was the available resource from which to research patterns and put them together like matching pieces of a jigsaw. It was the very growth of popular digital imaging, and its transmission by social media, that made the next giant leap possible.

As human beings we learn from our mistakes as much as our successes. Computers can’t do that because they have no way to judge which is which without some formula that can be tested and proven. That’s why Artificial Intelligence feeds on the vast human resource of information available on the internet to draw examples, and direct its output. And it’s the fallibility of that resource that is the Achilles heel of AI when it comes to making more ambitious conclusions.

By breaking free of the restrictions of established patterns, AI doesn’t learn from mistakes; it can actually reproduce them if not held in check or supervised. That’s the important difference. It’s learning from example, but is unable in itself to test the integrity of its output without correction. Now we have Generative AI, which is a massive leap of faith in the process, as it independently produces entirely new creations in words and pictures with limited reference to the original source. Not just predicting the next word, but dictating the next page.

In researching this column I discovered that there is a thing the IT experts call the ‘black box problem’, which is an inability to understand how AI actually makes some of its apparently random judgments. I’m certainly no IT boffin, but in simple terms it rather mimics a puzzle I illustrated in this column many years back: the black cat in the snow, an image which totally baffled the automated sensors of digital cameras as the colours - or absence of them - were outside of their electronic comfort zone. As photographers we learned to override the camera’s processing dilemma, that there was no blue sky or green grass to work from: switch to manual.

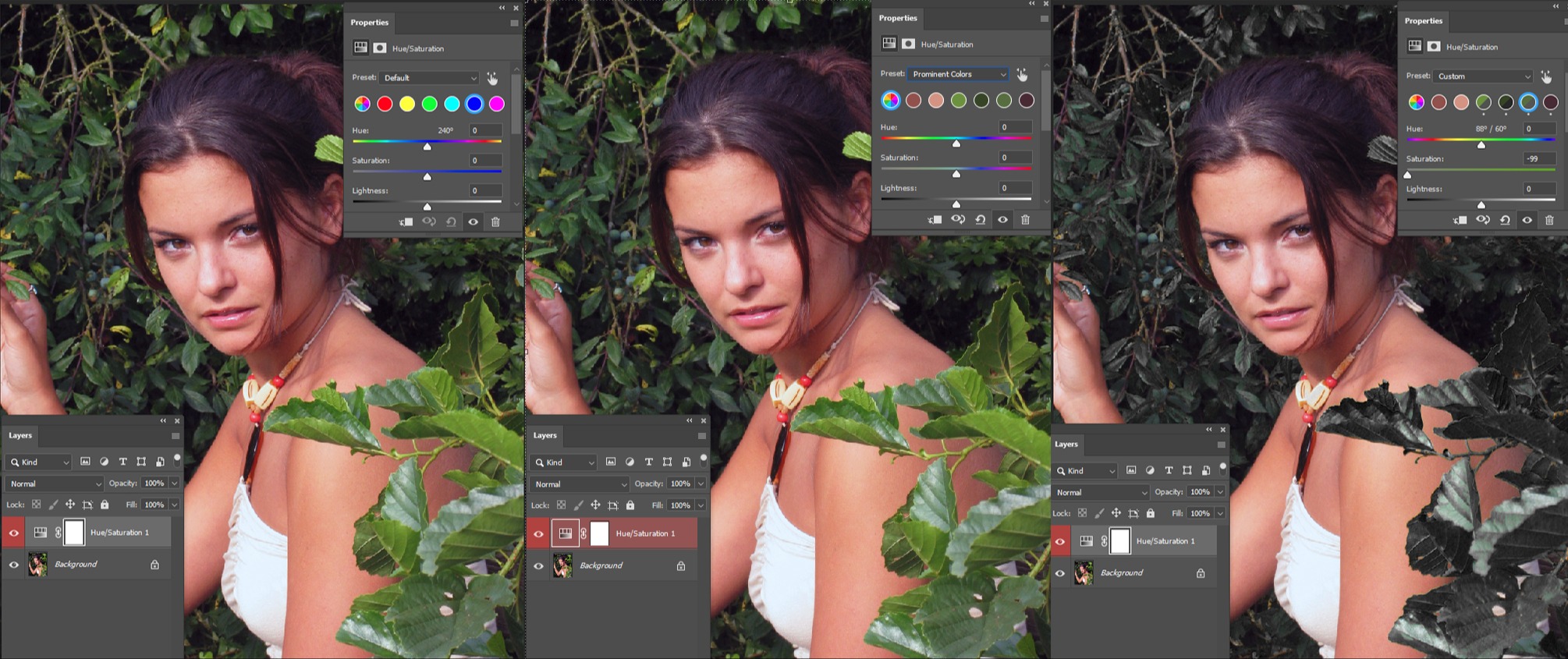

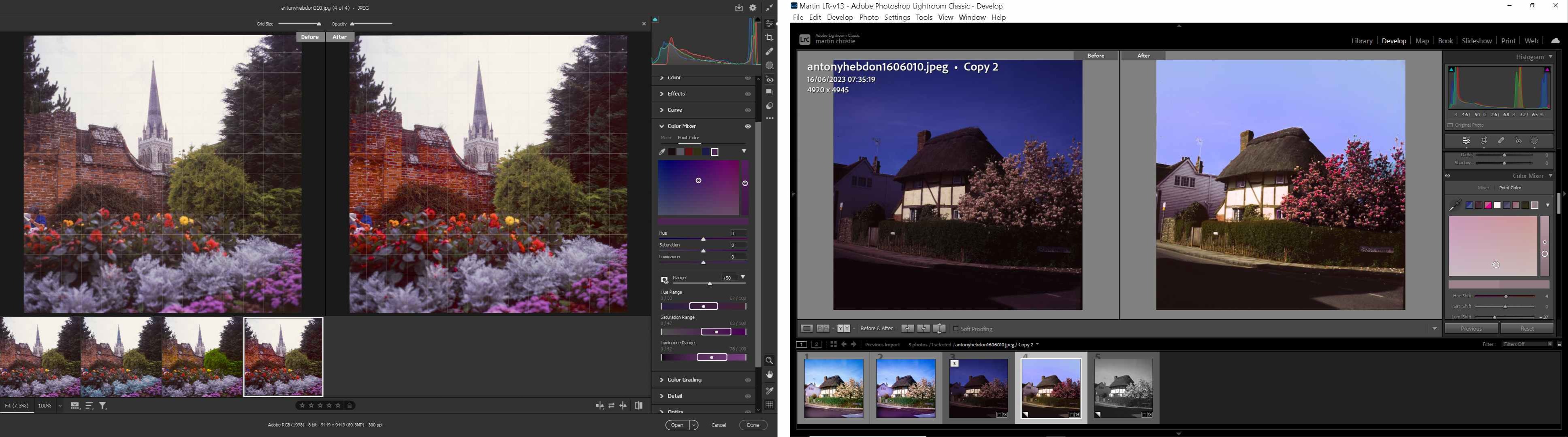

Last month I used the example of colour restoration of a family group, where the original photo had become badly faded through age. Without a little organic assistance, AI struggled to achieve acceptable results because it didn’t have enough reference to what the original colours would have been, and was creating new ones that looked too artificial. But there was one more thing I learned which didn’t register as significant at the time. I use layers in Photoshop to blend in edits as seamlessly as possible, but I noticed that the AI layer didn’t exactly match the perspective of the one below. I thought I had just made a slip of the mouse and moved an anchor point, but I’ve since learnt that AI works to specific aspect ratios - it was changing the shape all on its own.

That’s why some restorations don’t look quite right. It may not make any difference to a landscape, but people’s facial structures are quite specific, which is why we, as humans, recognise friends and family in a crowd of others. So it’s a cautionary note if you are doing image repair for customers in order to produce print - be careful to check how closely the new version compares with the original in detail. We have always had the digital problem of producing what the customer believes to be the correct colour. AI editing opens up a whole new potential can of worms. Computers don’t have to answer for their mistakes - you are the one who has to take the rap at the front counter.

The caution also extends to files submitted that may have been doctored by person or persons unknown. Because of computing demands, images generated by AI - particularly on phones - may well be low resolution, or contain elements that are. They may look fine on the screen of a mobile, but in print will be rejected.
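A quick sum is often enough to catch these before they reach the press. The sketch below, in Python, divides the pixel dimensions of a file by the intended print size in inches to get the pixels per inch it will actually deliver. The 300 ppi threshold is an assumption, a common rule of thumb rather than a fixed standard, and the function names and file sizes are invented for illustration; substitute whatever minimum your own workflow demands.

```python
def effective_ppi(pixel_width, pixel_height, print_width_in, print_height_in):
    """Pixels per inch the file delivers at the requested print size.

    The lower of the two axes is returned, as that is the one that limits quality.
    """
    return min(pixel_width / print_width_in, pixel_height / print_height_in)


def is_print_ready(pixel_width, pixel_height, print_width_in, print_height_in,
                   minimum_ppi=300):
    """True if the file meets the assumed minimum resolution for the print size."""
    ppi = effective_ppi(pixel_width, pixel_height, print_width_in, print_height_in)
    return ppi >= minimum_ppi


# Hypothetical files: a small phone-generated image and a larger camera file,
# both ordered as 10 x 8 inch prints.
print(effective_ppi(1024, 1024, 10, 8))
print(is_print_ready(1024, 1024, 10, 8))
print(is_print_ready(3000, 2400, 10, 8))
```

It won't spot a low-resolution patch pasted inside an otherwise sharp file, so the sum is a first filter, not a substitute for looking at the image at full size.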

Of course it’s your job to notice that, not the customer’s! Just one more minefield to pick your way through in the print room. It’s all very well explaining that it is the responsibility of the customer to provide suitable files, but they will point out that you are supposed to be the expert, not them. And in a world where you have to print a warning on a pizza box to remove packaging before eating, it’s well worth covering your back.

One further important point, as I have mentioned previously - and you should be aware - is that Adobe is now charging for generative actions on its subscription plan. You have a certain number of credits depending on your plan, but the debits vary considerably depending on which of several options you choose to employ, and they are not refundable if you, or the customer, don’t like the results. So it can be a waste of time as well as money, and another reason why resorting to AI as a first response may not be best practice.

There are alternatives, as there are many more actions in PS that rely not on AI but, as mentioned earlier, on machine learning, which uses an in-depth knowledge of the existing pixels to effect changes rather than create new ones. Content-Aware is a perfect example of this: it does exactly what it says on the tin, and if you don’t like the results you can use it as a workaround until you come up with a compromise you do like.

As mentioned, machine learning works by recognising patterns, so selective editing becomes much easier and faster than previous purely manual methods. It is not trying to paint over an image with a crude brush, but to separate its pixels in minute detail, because it can. It has become much improved and refined over the years because of the wealth of examples fed into the system by the umbilical cord of the internet.

This is where the professional, using a programme like Photoshop, will continue to have an advantage over the instant fix solutions available to the general public, as those will increasingly be entirely AI based because it is a simple solution rather than an accurate one. I’ve certainly learned more tricks in PS over the years than I can immediately recall, but next month I’ll try to pass on some for those who prefer to do more than press a single tab.

They may think it’s AI all over. It’s not yet!